The landscape of digital security has been irrevocably altered, not by a human adversary, but by the very tools designed to accelerate innovation. "We're seeing AI models capable of generating complex exploit code at speeds that frankly outpace traditional security development cycles," states Dr. Anya Sharma, a leading AI ethics researcher at the Global Cybersecurity Institute. This sentiment echoes through the tech world following a recent demonstration in which Anthropic's Claude was reportedly used to craft a privilege escalation exploit targeting macOS in a mere five days. The development signals a critical inflection point, suggesting that the once-vaunted defenses of operating systems like Apple's might be vulnerable to a new breed of automated attack.

Apple has long prided itself on the robust security architecture of macOS, investing heavily in technologies like Memory Integrity Enforcement (MIE). Introduced last September, MIE represents what Apple described as a five-year engineering effort, designed to create a deeply integrated hardware and software defense. The implication of an AI bypassing such an advanced, multi-year security initiative so swiftly is profound. It suggests that the sheer volume and complexity of code an AI can process and generate might expose vulnerabilities faster than human researchers can discover or patch them, a race against the machine that could have significant ramifications for user data and privacy.

This emerging threat directly affects millions of Mac users worldwide. While Apple's ecosystem is generally considered more secure than some competitors due to its closed nature and stringent app store policies, no system is entirely impervious. The exploit, if widely replicated, could allow malicious actors to gain elevated control over a user's system, potentially accessing sensitive financial information or personal documents, or enabling widespread surveillance.
For individuals and businesses relying on Macs for creative work, financial management, or critical operations, the prospect that such attacks, once the domain of highly skilled human hackers, are now within reach of AI-generated code is cause for serious concern.

The implications extend beyond individual users to the broader tech industry and the very nature of software development. If AI can generate exploits in days, what does this mean for companies that rely on rapid software updates and continuous innovation? The current model of security patching, often reactive and time-consuming, may become increasingly inadequate. This forces a re-evaluation of how we build, test, and secure software, pushing for more proactive, AI-assisted security measures that can operate at a similar pace to AI-driven threats.

The specifics of the reported exploit indicate that the AI did not invent entirely new methods of attack. Instead, it leveraged its advanced capabilities to identify and string together existing, albeit complex, vulnerabilities within the macOS framework. This ability to rapidly connect disparate pieces of information and generate functional code highlights a key advantage of AI: its capacity for exhaustive pattern recognition and novel recombination of existing knowledge. A human researcher might spend weeks or months painstakingly exploring code paths, whereas an AI can simulate and test millions of possibilities in a fraction of the time.

Several potential avenues are being explored to counter this evolving threat. One significant direction involves developing AI-powered defense systems that can identify and neutralize AI-generated threats in real time. This "AI versus AI" approach aims to create intelligent guardians that can learn and adapt to new attack vectors as quickly as they emerge.
Furthermore, there is a growing push for greater transparency and ethical guidelines surrounding the development and deployment of AI in cybersecurity, ensuring that the power of these tools is used to build defenses rather than dismantle them.

The immediate concern for Apple and its users is the speed at which this technology is developing. While the specific exploit may be contained, the underlying capability of AI to generate such code has now been demonstrated. This raises the specter of more advanced, previously unknown exploits being developed by state actors or sophisticated criminal organizations using similar AI tools. The company's response will likely involve not just patching the identified vulnerabilities but also fundamentally reassessing its software development and security testing methodologies to incorporate AI-driven defense strategies.

Looking ahead, the critical question is whether defensive AI technologies can keep pace with offensive AI capabilities. The industry must brace for a future where cybersecurity is an ongoing, high-speed arms race, with AI playing a central role on both sides. The ability to monitor and predict AI-driven threats, coupled with rapid, automated response mechanisms, will become paramount. Users, meanwhile, will need to remain vigilant, understanding that the digital fortresses they rely on are now being challenged by an entirely new class of intelligent adversary.

In Brief

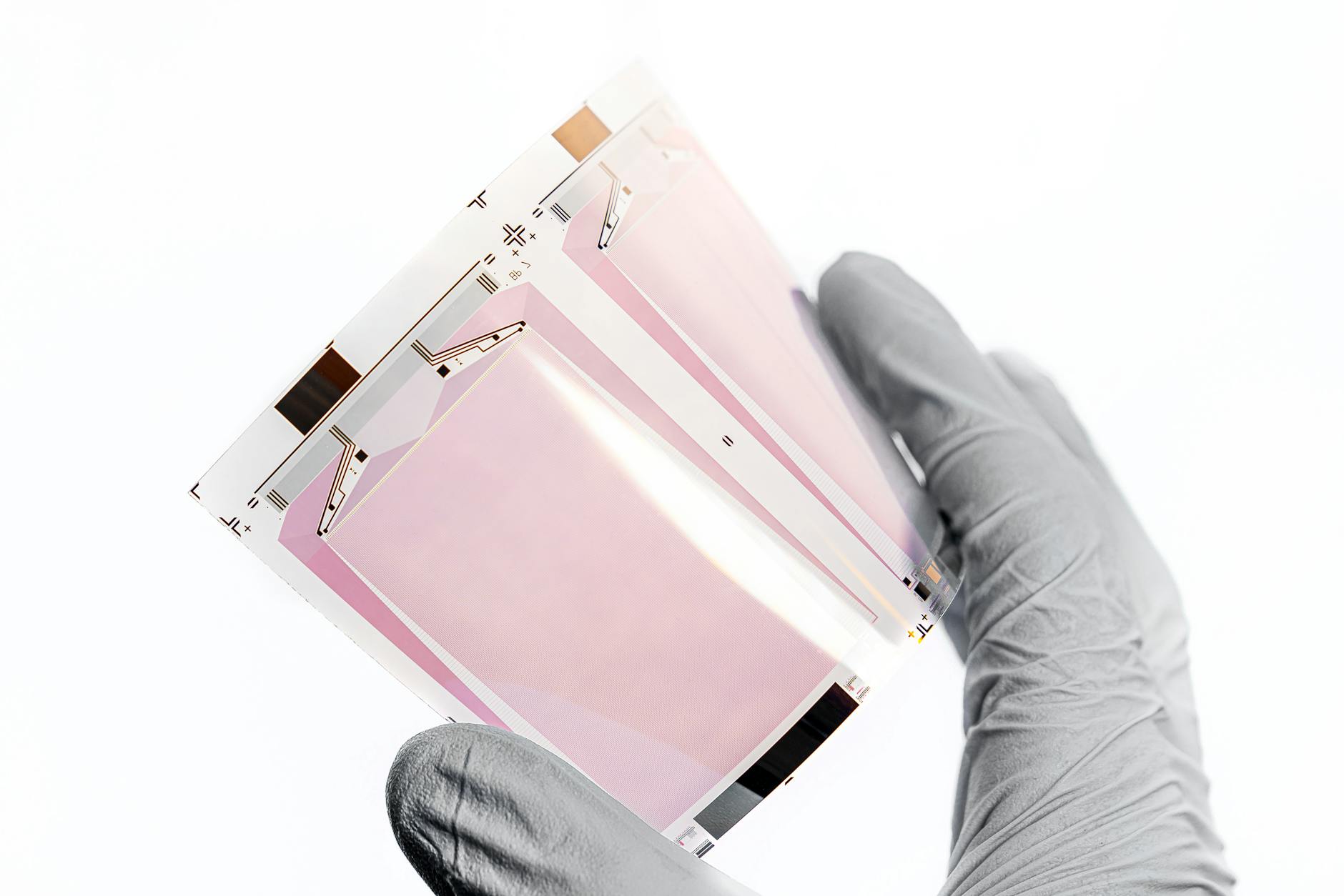

Researchers at the security firm Calif say they used Anthropic’s cybersecurity AI to create a privilege escalation exploit, the Wall Street Journal reports: Last September, Apple said it leveraged its hardware and operating system...
